I don’t think so.

Me neither. That is why I didn't understand your post but will admit maybe I misunderstood. Thanks for the clarity.

I think you understood correctly.

I've learned quite a few things anyway.

I would say that he saw a potentially interesting but untold story. From the length of the post I’d say his story provided interesting reading and stimulated a good discussion.

There is a reason why 99% of research scientists who do this for a living would see these results and say we can't say we have learned anything from them. If they repeated each of the designs over and over and then quantified the results, then we could run stats (can't say which test because we don't have the distribution of the data after repetition) and get some values that would be useful. But one and done runthroughs don't tell us anything. For example, if it rained all day on Tuesday here, and never again for the year, but Tuesday was my only sample, I would think that I live in a rainforest by seeing that 100% of the time it was raining. So each of these results could have been that Tuesday, or maybe two Tuesdays, and so on.

Right, the main ideas I want to pull from it are not conclusions about any one tank or start method. I think there's a lot of things we can get from the data that are more general - of the form "all the tanks subject to this effect, followed this path." Those are much more interesting to me than specifically how well a bag of oceandirect live sand did, etc.

Not sure if I understand your analogy here. There were 12 different setups tested at 4 different time periods, meaning a total of 48 tests have been performed (51 if we include the older system tested in BRS).

I agree that this is being looked into way too much. What I see when I look at their results is that I have learned nothing from the video. Why? Because it's not a good experiment by the standards of what is required to be confident in the results. I see it all as events that occurred by chance in unusual environments. The length of this thread is concerning me because again, people in this hobby have little to no experience with properly designing experiments and statistically analyzing results. There is a reason why 99% of research scientists who do this for a living would see these results and say we can't say we have learned anything from them. If they repeated each of the designs over and over and then quantified the results, then we could run stats (can't say which test because we don't have the distribution of the data after repetition) and get some values that would be useful. But one and done runthroughs don't tell us anything. For example, if it rained all day on Tuesday here, and never again for the year, but Tuesday was my only sample, I would think that I live in a rainforest by seeing that 100% of the time it was raining. So each of these results could have been that Tuesday, or maybe two Tuesdays, and so on. These are fun anecdotal observations and we need to stop reading into these as if we have any real results. This whole error is, imo, the main issue when hobbyists attempt to move their hobby thoughts into scientific thought.

Dang! You changed my perspective again!! That is the teacher in you. Maybe Taricha should be changed to Socrates.

Just to be a little more clear about my point of view: I don't have to accept most of the conjectures in the BRS videos, nor do I have to think everything measured by aquabiomics is meaningful in order to think that this large set of data is worth looking at.

If I were just aiming to illustrate flaws in the tests and the conclusions, it would be a short and pointless thread.

I appreciate the frequent cautions about concluding too much from single tests - really, I do. And I'll continue to avoid doing such a thing, like I said earlier....

Let me explain a bit more how I'm looking at the data. (though it's totally fine to continue to disagree with my perspective.)

Let's say we take 12 otherwise similar test subjects and send each one out in the rain wearing a different color pair of socks, then we get back the totally shocking data that all of them got wet.

Scientist A says: we can conclude nothing because we have no replicates - we needed two or three people in blue socks, two or three in red socks, same for green, etc.

Scientist B says: Why? We already have 12 replicate results that say sock color provides no protection against getting wet in the rain.

The BRS data I'm highlighting is basically like that. I'm focusing on areas where the tank results tracked in very much the same way across a number of initial conditions. They may have been intended to end up differently - but they ended up as replicates with respect to the data in question.

To be concrete (Idea 3): zero of 12 tanks achieved rapid diversity. They were sampled 4 times over 15 weeks. Only one hit 50th percentile diversity by week 15. How many more replicates of these starting setups do you want to see to be able to conclude that these materials set up in this way are unlikely to rapidly (~4 weeks) yield a high diversity tank?

I say we already have 12 replicates that tell us this is the case.
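One rough way to put a number on the zero-of-12 argument, as a minimal sketch: assume (and this is exactly the assumption being debated in this thread) that the 12 tanks can be treated as independent trials sharing one underlying chance p of reaching high diversity within ~4 weeks. Observing zero successes then gives an exact one-sided upper bound on p. The function name and the 95% confidence level are illustrative choices, not anything taken from the BRS or aquabiomics material.

```python
def upper_bound_zero_successes(n_trials: int, confidence: float = 0.95) -> float:
    """Exact one-sided upper confidence bound on a success probability p
    when 0 successes were observed in n_trials independent trials.

    With zero successes, the bound solves (1 - p) ** n_trials = 1 - confidence.
    """
    if n_trials < 1:
        raise ValueError("n_trials must be at least 1")
    if not 0.0 < confidence < 1.0:
        raise ValueError("confidence must be strictly between 0 and 1")
    alpha = 1.0 - confidence
    return 1.0 - alpha ** (1.0 / n_trials)


# Assumption: all 12 tanks count as trials of the same "rapid diversity" question.
bound_12_tanks = upper_bound_zero_successes(12)
# For contrast: what a single failed tank would tell us on its own.
bound_1_tank = upper_bound_zero_successes(1)

print(f"0 of 12: p could still be as high as {bound_12_tanks:.3f}")
print(f"0 of 1:  p could still be as high as {bound_1_tank:.3f}")
```

Under those assumptions, 12 failures out of 12 are compatible with a true success rate of up to roughly 22%, while a single failure bounds almost nothing (up to 95%). That speaks to the shared outcome only; it says nothing about differences between individual start methods, which is where the lack of true replicates bites.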

Don't let perfection be the enemy of the good, but be sure to point out what the good is missing.

Fair enough. I can agree that I'm stretching or abusing the term "replicates" in this sense.

Strictly speaking, there are no replicates in the BRS study and the controls are inadequate to easily conclude much from the study. There are replicates in the sense that repeated car crashes at an intersection with no stop sign are replicate events. There is data present, but the analysis of it takes the form of an investigation rather than a statistical analysis. The BRS experiments have a messy design, not even qualifying as a highly fractional factorial design. They are car crashes. As a result, whatever conclusion or narrative we tease out from that data will be speculative and potentially contentious. As long as we keep this in mind, we can have fun rationalizing the wreckage.

I am obviously a little late to this party thread, so trying to catch up. While it is an interesting discussion and test, it really only covers the "break in" period where the different surface competitors are battling it out.

Let me expand a little more on Balance Score, Diversity and visual judging of the systems.

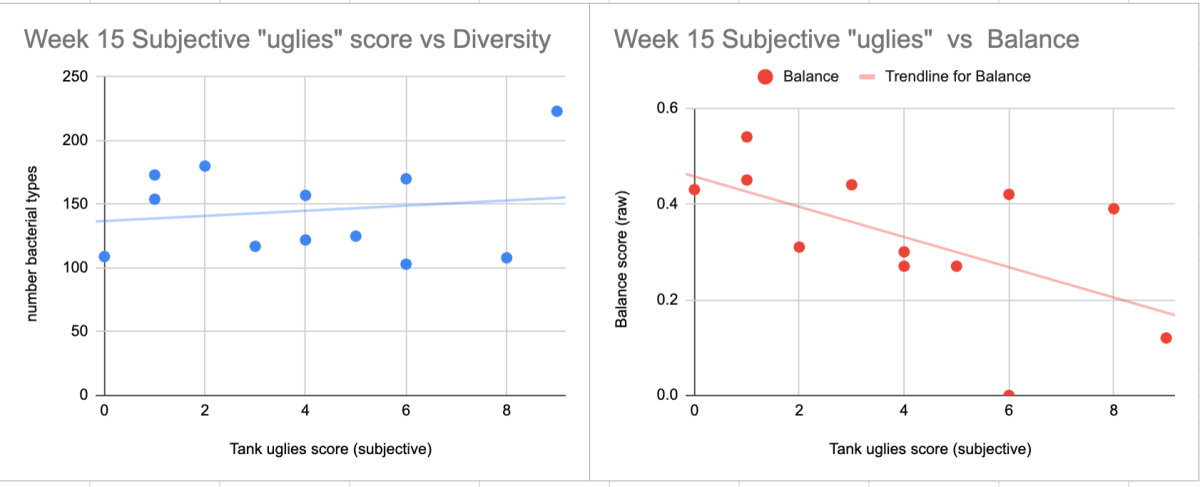

From watching the videos I tried to give each tank a subjective "uglies score" based on amount and apparent "health" of the nuisance growth at week 15. I rated each tank zero to 10, with zero being spotless and 10 being the most uglies.

You can see how the diversity doesn't reflect the eyeball assessment of uglies (not that it's meant to, but people wonder if it does). The balance on the other hand does seem to correlate decently with the visual assessment of uglies.

Additionally in terms of correlations - the systems that dropped most notably in balance percentile from weeks 10 to 15 also had an increase in uglies during that time period.

12 - biobrick

9 - gulf wet rock

5 - Coral

and 7 - tank rock/sand

...all had notable increases in nuisance growth from weeks 10-15, which can help to explain one way a system that has better "balance" score can regress on that metric during that time frame.
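For anyone who wants to check the "balance tracks the eyeball uglies score" impression with numbers rather than by eye, a rank correlation is one reasonable tool, since the uglies score is a subjective 0 to 10 rating. The sketch below is only an illustration: the function names are made up for this example, and the values are placeholders, NOT the BRS or aquabiomics numbers (those would have to be read off the chart above).

```python
from statistics import mean


def average_ranks(values):
    """Return 1-based ranks; tied values share the average of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values tied with position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1  # average of the 1-based positions i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def pearson(x, y):
    """Plain Pearson correlation of two equal-length sequences."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined when one input is constant")
    return cov / (var_x * var_y) ** 0.5


def spearman(x, y):
    """Spearman rank correlation: Pearson correlation of the ranks."""
    return pearson(average_ranks(x), average_ranks(y))


# Placeholder values for illustration only (hypothetical tanks).
uglies_score = [2, 5, 7, 3, 9, 6]
balance_percentile = [80, 40, 30, 70, 10, 45]

rho = spearman(uglies_score, balance_percentile)
print(f"Spearman rho = {rho:.3f}")
```

A value near -1 or +1 would indicate a strong monotonic relationship (the sign depends on which direction each scale runs); with only 12 tanks and a subjective score, any result should be read as a description of this data set rather than a tested effect.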

Another potential context -- and one I am continually involved in over on the SPS Forum -- is the one where five people per day ask "When will I be able to keep acropora alive in my tank?" I typically answer the question with a question: "Was this a dead rock start?" and then go from there.

One thing both views (diversity and balance) show is that there are quantifiable answers to a question that we often pose. Cycling takes 1-2 weeks, but we feel like tank "maturity" takes much longer - on the scale of months. But we don't have good answers to what we think happens between 2 weeks and a few months. One of those answers is that bacterial succession is clearly a much slower process, one that takes far longer than a couple of weeks.

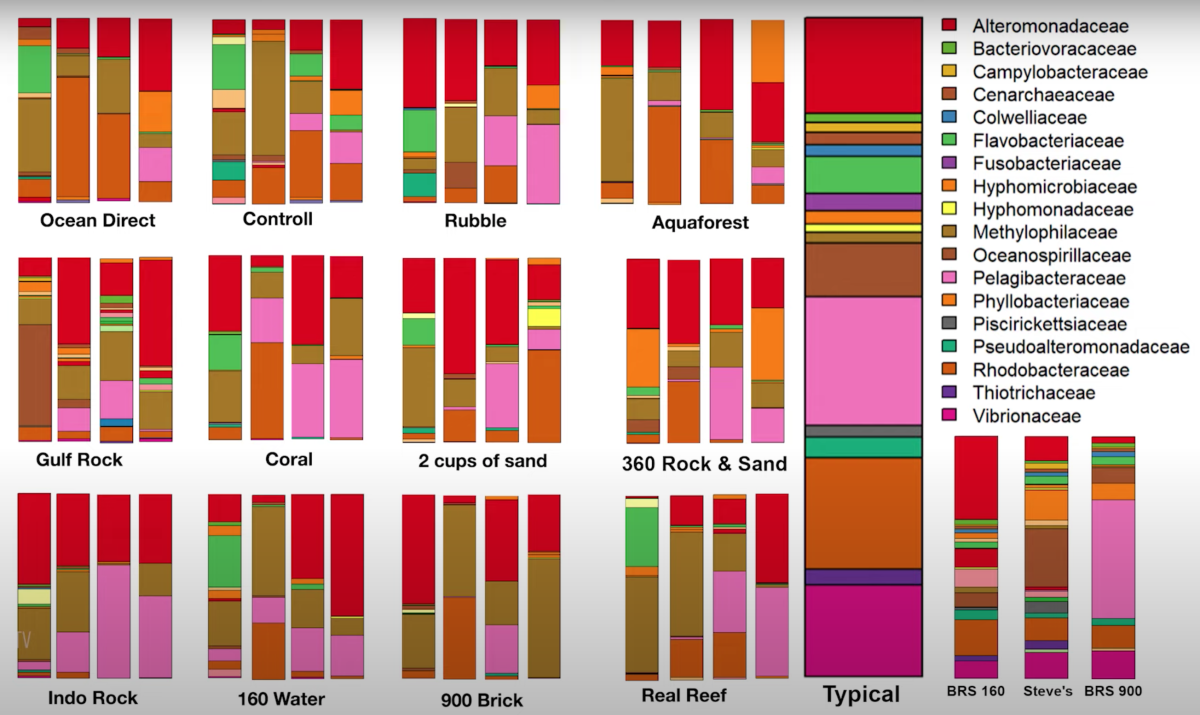

This is a snapshot of all 4 microbiome tests over 15 weeks on all 12 tanks... No tanks are totally settled across any time frame within this 15 week period. Some are in more flux than others.

But it does make me wonder if the old-school practice of putting live rock in big dark tubs circulating in saltwater for months might not have been crazy at all.

12 months just gets you to the cheap wine stage for dry rock. LOL.

They needed additional tests, both of which require time and money. Live rock and sand being one. The second being what you touched on here. Dry rock aged like wine. Test at 3, 6, 9, and 12 months.

Still a head scratcher to me, though, I'll have to admit. Why try to shorten the journey when reefs take years to mature? Unless, of course, shorter means selling more gear due to failures or trying to keep up with Mr. Jones.

I think this thread has shown that live rock might just be cheap wine in a fancy bottle with a high price tag.

As promised, here is a data visualization of the six most populous bacterial families, which together made up 90% or more of the families found in each BRS experiment at each of the four time points. What is actually plotted is a difference: the population makeup in the control at each time point minus the population makeup of each treatment.

Interesting how the orange line peaks in every single other tank, before leveling out close to control. With the exception of aqua forest and ocean direct, the yellow line had a much less pronounced inverse

Here's one way to slice Dan's data.

The red circles show the treatments that stayed closest to the Control (Dry Rock & Sand) for the first 2 measurement points - 2 and 4 weeks.

Real Reef (artificial rock cured in seawater)

160 water (got 100% of initial water from the BRS 160 gallon tank)

and to a lesser extent, 900 Brick (the biobrick out of the sump of the BRS 900)

The Blue circles show those 3 treatments that ended the closest to the Control during the last two measurement points - 10 and 15 weeks.

Ocean Direct (bagged live sand)

Dark Rubble (rubble from an established tank placed in the dark overflow)

and the Real Reef (again)

(and others converged nearly as much, but I drew the line at 3 closest.)

One way to think about this is that the treatments that were similar to control at weeks 2 and 4 maybe didn't have a strong initial push in changing the early community from what it looks like when you just put clownfish in a dry rock/sand tank and feed them.

And likewise, those that converged to something very close to the dry rock control at the end may not have had a strong lasting push on how the community developed.

And the bag of ocean direct sand was in both groups.
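For anyone wanting to redo this kind of slicing from the numbers instead of by eye, here is a minimal sketch. It computes the control-minus-treatment difference per family and then ranks treatments by how far they sit from the control over a chosen set of weeks. Several things here are assumptions: the family and treatment names are placeholders, the percentages are invented for illustration, and summing absolute differences is just one possible definition of "closest" (the circles in the chart may well have been judged differently).

```python
def control_minus_treatment(control, treatment):
    """Per-family difference at one time point: control share minus treatment share."""
    return {family: control[family] - treatment.get(family, 0.0) for family in control}


def distance_from_control(control_by_week, treatment_by_week, weeks):
    """Sum of absolute per-family differences over the chosen weeks (smaller = closer)."""
    total = 0.0
    for week in weeks:
        diffs = control_minus_treatment(control_by_week[week], treatment_by_week[week])
        total += sum(abs(d) for d in diffs.values())
    return total


def closest_to_control(control_by_week, treatments, weeks, top_n=3):
    """Names of the top_n treatments with the smallest distance from the control."""
    ranked = sorted(
        treatments,
        key=lambda name: distance_from_control(control_by_week, treatments[name], weeks),
    )
    return ranked[:top_n]


# Invented placeholder shares (percent of community) keyed by week, then family.
control = {
    2: {"family_a": 50.0, "family_b": 30.0},
    4: {"family_a": 40.0, "family_b": 35.0},
}
treatments = {
    "treatment_x": {
        2: {"family_a": 48.0, "family_b": 31.0},
        4: {"family_a": 41.0, "family_b": 33.0},
    },
    "treatment_y": {
        2: {"family_a": 20.0, "family_b": 60.0},
        4: {"family_a": 30.0, "family_b": 50.0},
    },
}

early_closest = closest_to_control(control, treatments, weeks=(2, 4), top_n=1)
print(early_closest)
```

Running the same ranking with `weeks=(10, 15)` on the real numbers would give the late-period group, and a treatment that shows up in both lists would be the numerical counterpart of the "in both groups" observation.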

Yeah, good call on the trends.

Where did you guys manage to find the data to fill in the charts? Is there more data anywhere else besides the videos?

I tricked @Dan_P into thinking it was interesting enough that he took the image of the bar charts from the video and converted the major families into numerical data.